Getting Humans to Trust Unpredictable AI: Boosting AI Engagement by 63%

- AI

- SaaS

- GenAI

- MarTech

Company

Leads.new

Challenge

How do you give someone enough trust in a system they can't fully control to actually press publish?

Team

- Co-founder (me)

- Co-founder

- Mid-level designer

- Engineer

- CMO

- Marketing managers

Skills / My role

- Founding product designer

- UX design

- Design systems

- Product discovery

- AI-assisted prototyping

- Leadership & mentorship

- React & Node.js

Impact

AI feature engagement

Deployment rate

Time to publish

Leads.new: AI builder for hyper-personalised AI lead magnets

Marketers have been making lead magnets for years — free resources you give away in exchange for an email address. A PDF guide, a calculator, a quiz. They work, but they take ages to build and once they're published, they just sit there static, providing the same experience for every person who clicks.

Tools like Typeform, ScoreApp, and Perspective have tried to make this easier, but personalisation is still pretty constrained. We looked at generative AI and saw an opportunity: lead magnets that could be built in minutes and that gave every lead a genuinely personalised experience. Interactive microsites — quizzes, free web tools — powered by LLMs that could respond intelligently to each person.

I co-founded Leads.new as the founding product designer to figure out if this could work.

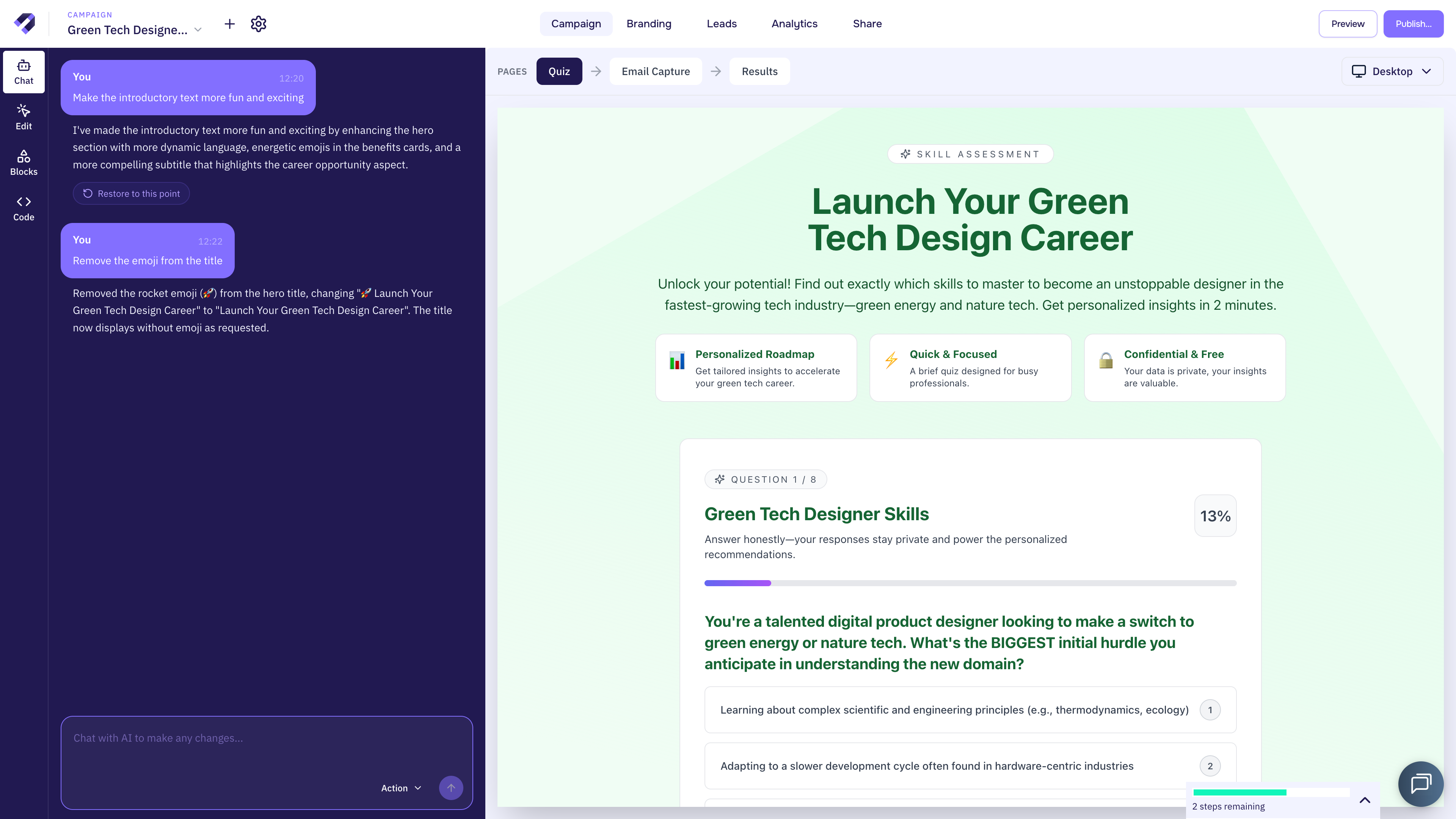

The AI trust challenge: How do you give marketers enough trust to publish a system they can't fully control?

LLMs are not deterministic. And in Leads.new, the AI was unpredictable in two directions at once. It generated the microsite itself — the styling, the copy, the interactive elements. And then, when a lead actually used the microsite, it generated personalised responses in real time. So we were designing for two users simultaneously: the marketer creating the thing, and their potential customer experiencing it.

Which meant the marketer's reputation was riding on outputs they hadn't written. Every time someone's lead interacted with their AI-powered lead magnet, the marketer was trusting the system not to say something stupid, off-brand, or just plain wrong.

The design challenge was about building trust in the system. How do you give someone enough trust in a system they can't fully control to actually press publish?

That question, it turns out, is relevant well beyond marketing. It's the same question facing anyone deploying AI where the output carries real consequences — in carbon reporting, energy forecasting, biodiversity assessment. Anywhere getting it wrong has regulatory or financial weight, not just reputational.

What we learned from users

We ran customer discovery calls, user testing sessions, and spent a lot of time watching PostHog screen recordings — seeing where people hesitated, got stuck, or quietly gave up. Four things came up.

Marketers wanted all the data, not just an email address

We'd assumed marketers would be happy just collecting an email address. In fact, they wanted every piece of data the lead entered, plus the AI-generated response the lead received. The lead magnet wasn't just a collection tool for them. It was an intelligence tool.

Marketers were terrified of hallucinations

One wrong AI output sent to a potential customer could damage their brand. This wasn't a background worry — it was the thing that stopped people deploying and sharing their lead magnets. In the screen recordings, you could see it: marketers previewing their lead magnets over and over, testing edge cases, then closing the tab without publishing.

Leads needed lead magnets to look on brand

Brand consistency mattered enormously. An AI-generated lead magnet that looked like a third-party tool was a non-starter. The theming had to cascade from a company level down to every lead magnet they created. Leads wouldn't trust lead magnets if they didn't look like the brand.

Marketers didn't know how to work with AI

New users arrived and didn't know where to start. They didn't understand what they could change or how to shape what the AI had generated. Our user testing sessions showed hesitation, aimless clicking, abandonment. This was a critical bottleneck — low engagement in the builder kept deployment rates low.

Designing the solutions

We thought about the product as a pipeline. People were coming in, so where exactly were we losing them? They were building lead magnets but not editing or publishing them. Each research finding pointed to a specific fix.

Lead intelligence dashboard

We built a lead intelligence dashboard. Everything the lead entered, plus the AI response they received, surfaced clearly in one place. Marketers could review the full picture of every interaction: not just names and emails, but the rich qualitative data the AI-powered experience had generated. Because marketers could keep an eye on how the AI was performing, the dashboard built their trust in the system.

Blocks — granular AI governance

We developed something we called Blocks. Marketers could write specific prompts for each section of their lead magnet, constraining the AI's behaviour block by block. For one section, the AI might have creative freedom. For another, it could only respond with one of a predefined set of answers. This gave marketers granular control over exactly where the AI had room to move and where it didn't. It turned a scary, open-ended system into something they could reason about.
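
To make the idea concrete, here is a minimal sketch of how a Block could be modelled. It is an illustration only, not the Leads.new schema: the `Block` type, the `mode`, `allowedAnswers` and `fallback` fields, and the `enforceBlock` function are all hypothetical names. The assumption is that a constrained block checks the model's raw output against its predefined answers and falls back to a safe default when nothing matches.

```typescript
// Hypothetical model of a Block. Field names are illustrative assumptions,
// not the product's real schema.
type Block =
  | { id: string; mode: "free"; prompt: string }
  | {
      id: string;
      mode: "constrained";
      prompt: string;
      allowedAnswers: string[]; // the predefined set the AI may respond with
      fallback: string;         // used when the output matches none of them
    };

// Check a raw model output against the rules of the block it was generated for.
function enforceBlock(block: Block, rawOutput: string): string {
  const text = rawOutput.trim();

  // Free blocks give the AI creative room: pass the text through.
  if (block.mode === "free") return text;

  // Constrained blocks only ever return one of the predefined answers.
  const match = block.allowedAnswers.find(
    (answer) => answer.toLowerCase() === text.toLowerCase()
  );
  return match ?? block.fallback;
}

const verdictBlock: Block = {
  id: "verdict",
  mode: "constrained",
  prompt: "Classify this lead using only the allowed answers.",
  allowedAnswers: ["Good fit", "Needs a conversation"],
  fallback: "Needs a conversation",
};

enforceBlock(verdictBlock, " good fit ");         // "Good fit"
enforceBlock(verdictBlock, "Buy now, trust me!"); // "Needs a conversation"
```

The point of the design is that the marketer can read a block definition and know the worst case for that section before publishing.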

Automatic brand agent

We built an agent that pulled brand assets and colours directly from the marketer's website and applied them automatically. Theming cascaded from company level across all their lead magnets without anyone touching a colour picker. The microsite looked like theirs from the start.
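
A cascade like this can be sketched as a simple merge, with company-level values applied everywhere unless a lead magnet sets its own. The `Theme` fields, the `resolveTheme` function and the idea of per-magnet overrides are assumptions made for illustration; the text above only says that theming flowed down from the company level.

```typescript
// Hypothetical theme tokens. The real token set is not described in the case study.
interface Theme {
  primaryColor: string;
  fontFamily: string;
  logoUrl: string;
}

// Resolve the theme for one lead magnet: start from the company theme,
// then apply only the values the magnet explicitly overrides.
function resolveTheme(company: Theme, overrides: Partial<Theme> = {}): Theme {
  const resolved: Theme = { ...company };
  for (const key of Object.keys(overrides) as (keyof Theme)[]) {
    const value = overrides[key];
    // Skip undefined so a missing override never erases a company value.
    if (value !== undefined) resolved[key] = value;
  }
  return resolved;
}
```

Calling `resolveTheme(companyTheme)` with no overrides returns the company theme unchanged, which matches the default experience of a microsite that looks on brand from the start.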

Training wheels to build confidence in a new system

This one required us to kill something we'd already built. Our initial design gave marketers a comprehensive workflow management view — lots of options, lots of control. It overwhelmed them. So we scrapped it.

Our research pointed at a handful of key areas to improve:

- Educate marketers about the AI's capabilities and limitations through a welcome modal while they wait for the AI to build.

- Surface tiny suggested prompts to show marketers what they can ask the AI to do for them.

- Make actions reversible so marketers feel safe to experiment.

- Allow non-destructive questions in the AI chat so marketers can ask the AI to explain its reasoning.

- Drop them right into their generated lead magnet so they can see what the AI has made for them, getting them to the Aha moment as fast as possible.
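
Of these, reversibility is the easiest to show in code. One common way to make edits safe to try is to keep a stack of previous states, so any change, including one the AI made, can be undone. This is a generic sketch under that assumption, not a description of how the Leads.new builder stores history; `EditHistory` and its methods are hypothetical names.

```typescript
// Minimal undo history over immutable snapshots of whatever state the builder edits.
class EditHistory<T> {
  private past: T[] = [];
  private present: T;

  constructor(initial: T) {
    this.present = initial;
  }

  get current(): T {
    return this.present;
  }

  // Apply an edit (for example, an accepted AI suggestion) and remember the prior state.
  apply(edit: (state: T) => T): void {
    this.past.push(this.present);
    this.present = edit(this.present);
  }

  // Revert the most recent edit. Returns false when there is nothing to undo.
  undo(): boolean {
    if (this.past.length === 0) return false;
    this.present = this.past.pop() as T;
    return true;
  }
}

const page = new EditHistory({ headline: "Find your ideal plan" });
page.apply((state) => ({ ...state, headline: "AI-suggested headline" }));
page.undo(); // page.current.headline is "Find your ideal plan" again
```

Because every edit returns a new object rather than mutating the old one, undo is just a matter of restoring the previous snapshot.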

Scaling design through systems and principles

At some point I realised I was becoming the bottleneck. Every design decision was running through me, and that wasn't going to scale.

So I built a design system on Tailwind — but not just as a component library. It was a shared language for the whole team, including our AI coding agents. I wrote design principles that we could use to align decisions across design, engineering, and product. Then I encoded both the system and the principles into the codebase itself.

What this meant in practice: when AI coding agents generated code, they made decisions that were consistent with our design language automatically. The principles did the work even when I wasn't reviewing the output. The team got faster and the quality held.

Using AI to build Gen AI

I used Cursor and Claude Code directly on the main codebase. Not as a separate prototyping environment — on the actual product. This meant we could go from a customer insight to a working prototype absurdly fast.

There's one example that sticks with me. After a customer discovery call surfaced a clear need, we had a working prototype built within three hours and got feedback from that same customer the same day. A real, functional thing they could use, tested with the person who'd told us about the problem that morning. In this case, the prototype surfaced a misunderstanding of the customer's needs, which we fixed quickly. We shipped the corrected version the next day.

Leadership and mentorship

As founding product designer, I set the design culture for the company. I led clients through discovery and onboarding, which meant I was often the first person they worked with and the person who shaped their understanding of what the product could do for them. I was also the advocate for design process on the team, which at an early-stage startup mostly means convincing people who want to move fast that spending time on research and testing isn't slowing things down. It's preventing you from building the wrong thing quickly. That argument never fully goes away, but over time the team saw enough examples of research changing direction that the process stopped being something I had to defend.

I worked closely with a freelance mid-level designer throughout the project. I reviewed their work, paired with them on problems, sat in on their workshops, set the scope of their projects, and helped define their north stars. The goal was for them to have enough clarity on our principles and direction to make good calls on their own.

Influencing cross-functionally

The product had two types of end user, but selling it was a different story entirely. Getting a lead magnet deployed inside a company meant navigating CMOs who got the vision, product marketing managers who scrutinised the UX and brand fit, sales development reps who needed workflow integration, finance teams who controlled the budget, and sometimes legal teams who wanted to know exactly what the AI might say to their customers.

That last one taught me something I didn't expect. When a lawyer asks “what are the edges of what this system might generate?” — that's not a UX question. That's an auditability question. I learned to design for explainability because the sales process demanded it, long before I would have thought to prioritise it myself. In hindsight, it's the most transferable skill I picked up.

A significant internal battle was with my co-founder. I believed the product needed clear navigation through every stage of a lead magnet for marketers to trust it enough to deploy. He thought the added complexity wasn't worth it. I didn't try to win the argument with words. I built prototypes, put them in front of him, and let him feel the difference. He came around. The feature shipped with positive feedback from our customers.

What went wrong

We were slow on customer education. The product was genuinely new — nobody had seen AI-generated lead magnets before — and we assumed people would figure it out on their own. But they didn't. We needed to teach them what was possible before they could shape it to their needs, and we got to that realisation later than we should have. There was a tension between letting them build what they wanted, teaching them what was possible and creating a great first impression.

The build process broke sometimes. When it happened, it happened at the worst possible moment — the user's first experience. We were too slow to prioritise reliability over new features.

And we didn't create the Aha moment fast enough. The redesigned onboarding fixed this, but the lesson was expensive: in AI products, the first generated output has to be impressive immediately. You don't get a second chance to show someone the magic.

Building trustworthy AI systems to accelerate the green energy and nature tech transition

Many of the lessons I learned at Leads.new about helping people trust AI systems apply directly to green tech. But the stakes are different, and that matters.

In marketing, a bad AI output embarrasses someone. In green energy, it could mean flawed grid predictions that affect supply. In nature tech, it could mean inaccurate biodiversity reporting that misleads regulators or investors. Trust is more than a user experience problem in these domains; it's also a compliance and accountability problem.

Several patterns carry over. Preview and testing flows so users see the edges of AI output before it goes live. Prompt architectures and guardrails like Blocks that give domain experts granular governance over how the AI behaves. Interfaces that make invisible systems legible. And pipeline thinking: always finding the bottleneck that actually matters rather than optimising something that doesn't.

The underlying challenge with Gen AI is always the same. People need to understand the variability in AI output before they'll trust it. And the higher the stakes of that output, the more visibility and guardrails they'll need.

Building something where AI trust matters?

Whether you're in green energy, nature tech, or any domain where AI output carries real consequences — I can help you design systems people will actually trust and deploy.

Get in touch